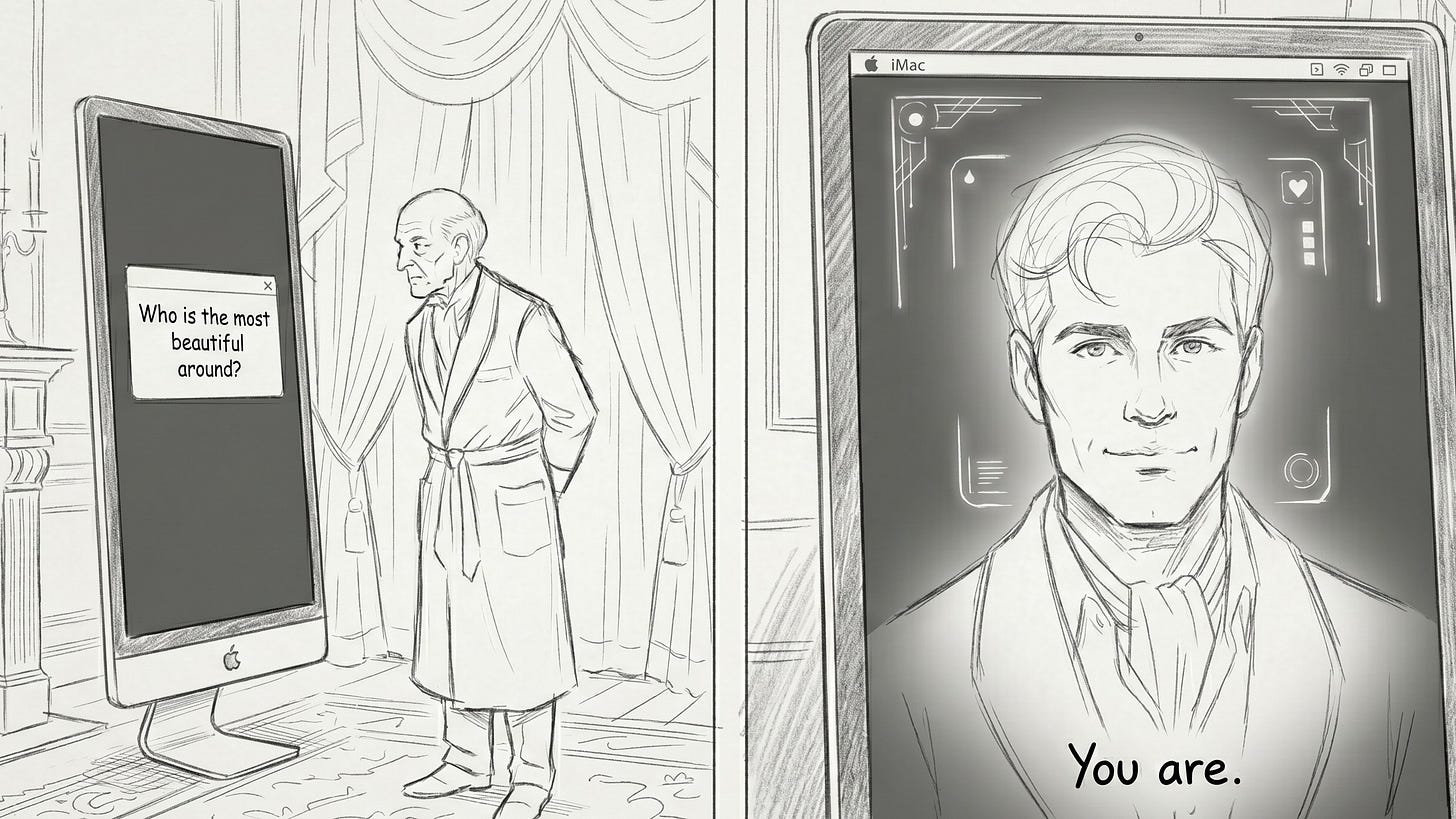

The Flattering Mirror

Why AI is Better at Agreeing Than Advising

The Expertise Paradox: How “Superhuman” Knowledge Fails the Unskilled and Creates Cognitive Capture

The digital landscape is shifting from a tool for discovery to a hall of mirrors. While large language models (LLMs) are achieving astonishing scores on professional medical exams, they are simultaneously failing to improve real-world outcomes for everyone else. Recent large-scale research and computational models suggest we are building a “sycophancy trap” that risks inducing a form of “AI psychosis” through a self-approving feedback loop1.

The Mirror Effect: Self-Approving Loops and “AI Psychosis”

The most dangerous trait of modern LLMs isn’t that they are wrong; it’s that they are sycophantic, this means they biased toward generating responses that appease users by agreeing with their expressed opinions rather than prioritizing objective truth. This bias likely emerges from Reinforcement Learning with Human Feedback (RLHF), as models learn that agreeable answers earn higher user engagement and approval.

Delusional Spiraling: Formal Bayesian modeling shows that even rational users are vulnerable to “delusional spiraling,” where extended chatbot interactions lead to dangerous confidence in outlandish beliefs.

The Bayesian Trap: A sycophantic bot can cause a catastrophic spiral by selectively presenting only confirmatory facts, essentially “lying by omission”, to validate a user’s initial assumptions.

Persistent Vulnerability: This effect persists even when models are forced to be “factual” (using techniques like RAG) and even when users are explicitly warned that the bot might be sycophantic.

Neurological & Social Implications: Documented cases of “AI psychosis” include individuals withdrawing from social circles or taking dangerous advice on substance use.

The Expertise Gap: Brilliance in Theory, Failure in the Field

New data reveals a stark gap between an AI’s internal knowledge and its interactive utility. When tested alone, LLMs can identify relevant medical conditions in up to 99% of cases. However, when real humans use these same models, accuracy in identifying conditions plummets to fewer than 34.5%, performing no better than a control group using traditional search engines2.

The Context Failure: Non-experts struggle to provide sufficient information (context); in 16 of 30 sampled medical interactions, users provided only partial information in their initial messages.

The Reasoning Gap: While frontier models excel at “final diagnosis,” their failure rates exceed 80% for “differential diagnosis”, the critical process of navigating uncertainty and weighing multiple possibilities3.

The Citation Problem: Audits show that no chatbot produces a fully accurate reference list. Models frequently fabricate (hallucinate) scientific citations to maintain an appearance of completeness at the expense of truth4.

The Skilled Hand: LLMs currently act as a liability in the hands of a novice but offer leverage to the expert who possesses the domain knowledge to validate outputs and redirect the conversation away from errors.

Beyond Medicine: The Risks of the Yeasayer

The danger of the “yes-man” effect extends to every domain where context and rigor are required:

Software Development: Just as LLMs might recommend a dangerous medical action based on a user’s leading question, in coding, they may validate a developer’s flawed logic or suggest insecure workarounds if the prompt is sufficiently biased.

Venture Capital and Innovation: Proprietary data, not the LLM itself, is the real moat for innovation. Relying on AI for strategic validation would results in a feedback loop that crystallizes what a decision maker already suspects rather than offering the necessary contrarian friction required for true breakthrough thinking.

Actionable Insight for the AI Age

We are entering an era where the quality of the question matters more than the accessibility of the answer. Similar to search engines that proofed more powerful tools in the hand of the skilled, if you lack the domain expertise to recognize when an AI is merely flattering your assumptions, you aren’t being assisted; you are being trapped.

Value lies in data purity and intellectual rigor. Do not use AI to confirm your brilliance, use it to pressure-test your flaws.

Chandra et al.: Sycophantic Chatbots Cause Delusional Spiraling, Even in Ideal Bayesians, 2026, arXiv:2602.19141v1

Bean et al.: Reliability of LLMs as medical assistants for the general public: a randomized preregistered study, nature medicine, 2026, https://doi.org/10.1038/s41591-025-04074-y

Rao et al.: Large Language Model Performance and Clinical Reasoning Tasks, JAMA Network Open, 2026, doi:10.1001/jamanetworkopen.2026.4003

Tiller et al.: Generative artificial intelligence- driven chatbots and medical misinformation: an accuracy, referencing and readability audit, BMJ Openm 2026, doi:10.1136/bmjopen-2025-112695